What are the algorithms used in machine learning?

This article briefly explains some of the most popular machine learning algorithms, making them easier to understand for everyone.

1. Linear Regression

Linear Regression, one of the most basic machine learning algorithms available, is used to predict continuous dependent variables using data from independent variables.

A dependent variable is the outcome you want to predict; its value changes in response to the independent variables.

You might remember the line of best fit from your time in school; that line is what linear regression produces. One straightforward illustration is estimating someone’s weight based on their height.
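As a rough sketch of the height and weight example, the line can be computed with the ordinary least squares formulas for a single feature. The function name `fit_line` and the numbers below are made up for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # slope = covariance(x, y) / variance(x)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical heights (cm) and weights (kg), not real measurements.
heights = [150, 160, 170, 180, 190]
weights = [50, 58, 66, 74, 82]

slope, intercept = fit_line(heights, weights)
predicted = slope * 175 + intercept  # estimated weight for a height of 175 cm
print(round(predicted, 1))
```

The fitted line is then used for prediction by plugging a new height into `slope * height + intercept`.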

2. Logistic Regression

Like linear regression, logistic regression makes predictions from independent variables, but the dependent variable it predicts is categorical.

A categorical variable takes one of two or more categories. Logistic regression outputs a probability between 0 and 1, which is then compared with a threshold (commonly 0.5) to pick a category.

For instance, you can use logistic regression to predict, based on a student’s grades, whether or not they would be admitted to a specific college: either Yes or No, or 1 or 0.
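The admission example might look like the sketch below, which fits a one-feature logistic regression with plain gradient descent. The data, learning rate, and epoch count are assumptions chosen for illustration; a real project would normally use a library implementation.

```python
import math

def sigmoid(z):
    # Squashes any real number into the range (0, 1).
    return 1.0 / (1.0 + math.exp(-z))

def train(xs, ys, lr=0.1, epochs=5000):
    """Fit weight w and bias b by gradient descent on the log loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

def predict_proba(x, w, b):
    return sigmoid(w * x + b)

# Hypothetical grade point averages and admission outcomes (1 = Yes, 0 = No).
gpas = [2.0, 2.5, 3.0, 3.5, 4.0]
admitted = [0, 0, 0, 1, 1]

w, b = train(gpas, admitted)
for gpa in (2.2, 3.8):
    p = predict_proba(gpa, w, b)
    print(gpa, "Yes" if p >= 0.5 else "No")
```

The 0.5 cutoff is a common default rather than a requirement; it can be moved when one kind of mistake is more costly than the other.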

3. Decision Trees

Decision Trees (DTs) are tree-structured models that repeatedly split the data, classifying it or predicting an outcome based on the answers to a sequence of questions.

The model learns from the data which questions about its features are most informative, and it answers new cases by following those questions down the tree.

As an illustration, a decision tree can identify a certain species of bird by asking a series of Yes or No questions about its feathers, ability to fly or swim, kind of beak, etc.
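One way a tree can choose which question to ask first is to measure how mixed the groups are after each possible split. The sketch below uses Gini impurity for that, which is one common choice among several; the bird data and feature names are hypothetical.

```python
def gini(labels):
    """Gini impurity: 0.0 when every label in the group is the same."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))

def best_question(rows, labels):
    """Return (feature, weighted impurity) for the Yes/No feature that splits best."""
    best = None
    for feature in rows[0]:
        yes = [lab for row, lab in zip(rows, labels) if row[feature]]
        no = [lab for row, lab in zip(rows, labels) if not row[feature]]
        score = (len(yes) * gini(yes) + len(no) * gini(no)) / len(labels)
        if best is None or score < best[1]:
            best = (feature, score)
    return best

# Hypothetical observations of four birds.
birds = [
    {"can_fly": True, "has_long_beak": True},
    {"can_fly": True, "has_long_beak": False},
    {"can_fly": False, "has_long_beak": True},
    {"can_fly": False, "has_long_beak": False},
]
species = ["sparrow", "sparrow", "penguin", "penguin"]

print(best_question(birds, species))
```

A full tree repeats this search inside each resulting group until the groups are pure or a depth limit is reached.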

4. Random Forest

Random Forest is also a tree-based algorithm, much like Decision Trees. Instead of a single decision tree, it builds several decision trees (a forest of trees) and combines their outputs to make a prediction.

It can be used for both classification and regression problems, and it is an ensemble method, meaning it combines different models to create predictions.
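The sketch below shows only the combining step for classification: each tree votes and the most common answer wins. The three "trees" are hand-written stand-ins with hypothetical rules; a real random forest would learn each tree from a different random sample of the data and features.

```python
from collections import Counter

# Hypothetical stand-ins for trained trees, each looking at a different feature.
def tree_a(bird):
    return "penguin" if bird["can_swim"] else "sparrow"

def tree_b(bird):
    return "sparrow" if bird["can_fly"] else "penguin"

def tree_c(bird):
    return "penguin" if bird["weight_kg"] > 1.0 else "sparrow"

def forest_predict(trees, sample):
    """Majority vote over the predictions of all trees."""
    votes = Counter(tree(sample) for tree in trees)
    return votes.most_common(1)[0][0]

bird = {"can_swim": True, "can_fly": True, "weight_kg": 0.03}
prediction = forest_predict([tree_a, tree_b, tree_c], bird)
print(prediction)  # two of the three trees say "sparrow"
```

For regression problems the vote would typically be replaced by an average of the trees' numeric predictions.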

5. K-Nearest Neighbors

K-Nearest Neighbors (KNN) classifies a data point by looking at the k training points closest to it and assigning the most common category among them. The proximity of the data points is treated as a measure of how similar they are to one another.

For instance, suppose that we had a graph with two groups of data points: Group A and Group B, each a close-knit cluster.

Each new data point that is entered is assigned to whichever group its nearest neighbors belong to.
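The Group A and Group B example can be sketched as follows, assuming Euclidean distance and k = 3 (both are choices made for this illustration, and the coordinates are made up).

```python
import math
from collections import Counter

def knn_predict(points, labels, query, k=3):
    """Label the query with the most common label among its k nearest points."""
    by_distance = sorted(
        zip(points, labels),
        key=lambda pair: math.dist(pair[0], query),
    )
    nearest_labels = [label for _, label in by_distance[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]

# Two close-knit groups of hypothetical points.
points = [(1, 1), (1, 2), (2, 1), (8, 8), (8, 9), (9, 8)]
labels = ["A", "A", "A", "B", "B", "B"]

print(knn_predict(points, labels, (2, 2)))  # near Group A
print(knn_predict(points, labels, (7, 9)))  # near Group B
```

Choosing an odd k for a two-group problem avoids tied votes.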

6. Support Vector Machines

Like K-Nearest Neighbors, Support Vector Machines (SVMs) can be used for classification and regression, and they can also be used for outlier detection.

For classification, an SVM divides the classes using a hyperplane (a straight line when the data has two dimensions), chosen so that the margin between the line and the nearest points of each class is as wide as possible. Data points situated on one side of the line are assigned to Group A, and points situated on the opposite side are assigned to Group B.

For instance, the group to which a newly entered data point belongs will depend on which side of the hyperplane it is on; a point that falls inside the margin lies close to the boundary, so its classification is less certain.
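The sketch below covers only the prediction step of a linear SVM, assuming the hyperplane has already been learned. The weights and bias are hypothetical values describing the line x1 + x2 = 10; finding them from data is the training step, which is not shown.

```python
def decision_value(weights, bias, point):
    # Signed score: positive on one side of the hyperplane, negative on the other.
    return sum(w * x for w, x in zip(weights, point)) + bias

def classify(weights, bias, point):
    """Return (group, inside_margin) for a point."""
    score = decision_value(weights, bias, point)
    group = "B" if score >= 0 else "A"
    # By the usual convention the margin boundaries sit at scores -1 and +1.
    inside_margin = abs(score) < 1
    return group, inside_margin

# Hypothetical learned hyperplane: x1 + x2 - 10 = 0.
weights, bias = (1.0, 1.0), -10.0

print(classify(weights, bias, (1, 2)))    # far on the Group A side
print(classify(weights, bias, (8, 8)))    # far on the Group B side
print(classify(weights, bias, (5, 5.5)))  # Group B side, but inside the margin
```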

7. Naive Bayes

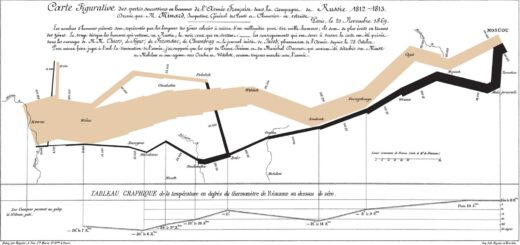

Bayes’ theorem, a mathematical formula for computing conditional probabilities, is the foundation of Naive Bayes. A conditional probability is the probability of an outcome happening given that another event has also happened.

For each class, the algorithm computes the probability that a data point belongs to it, under the “naive” assumption that the features are independent of one another given the class. The class with the highest probability is regarded as the most probable class.
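As a small sketch of that calculation, the example below scores two classes for a message containing certain words. The spam filtering scenario and every probability in it are assumptions made up for illustration; in practice these numbers are estimated from training data.

```python
# Hypothetical prior probabilities of each class.
priors = {"spam": 0.4, "ham": 0.6}

# Hypothetical P(word appears | class).
likelihoods = {
    "spam": {"free": 0.8, "meeting": 0.1},
    "ham": {"free": 0.1, "meeting": 0.5},
}

def posterior(words):
    """Apply Bayes' theorem, treating the words as independent given the class."""
    scores = {}
    for cls, prior in priors.items():
        score = prior
        for word in words:
            score *= likelihoods[cls][word]
        scores[cls] = score
    total = sum(scores.values())  # normalizing constant
    return {cls: score / total for cls, score in scores.items()}

result = posterior(["free"])
best = max(result, key=result.get)
print(best, round(result[best], 3))
```

Multiplying the per-word probabilities together is exactly where the independence assumption is used.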

8. k-means Clustering

Similar to nearest neighbors, k-means clustering groups similar objects or data points into clusters based on distance, but it works without labeled data. The term “K” refers to the number of clusters.

This is accomplished by choosing the k value, initializing k centroids, assigning each point to its nearest centroid, and recalculating each centroid as the average of its assigned points. The last two steps are repeated until the assignments stop changing.

If there are three clusters, for instance, and a new data point is entered, the cluster to which it belongs will depend on which centroid is closest.
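The steps above can be sketched as a short loop. To keep the result reproducible, this version takes the starting centroids as an argument and runs a fixed number of iterations; real implementations usually pick the starting centroids randomly and stop when nothing changes. The points are made up, and k is 2 here for brevity.

```python
import math

def kmeans(points, centroids, iterations=10):
    """Alternate between assigning points and recomputing centroids."""
    for _ in range(iterations):
        # Assignment step: put each point in the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)),
                          key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the average of its points.
        centroids = [
            tuple(sum(coord) / len(cluster) for coord in zip(*cluster))
            if cluster else centroid
            for cluster, centroid in zip(clusters, centroids)
        ]
    return centroids, clusters

points = [(1, 1), (1, 2), (2, 1), (8, 8), (8, 9), (9, 8)]
centroids, clusters = kmeans(points, centroids=[(0, 0), (10, 10)])

# A new point joins the cluster whose centroid is closest.
new_point = (7, 7)
cluster_index = min(range(len(centroids)),
                    key=lambda i: math.dist(new_point, centroids[i]))
print(cluster_index)
```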

9. Bagging

Bagging, also referred to as bootstrap aggregating, is an ensemble learning technique. It is used in both regression and classification models to reduce the variance of the predictions and help prevent overfitting. Several models are trained on random samples of the training data drawn with replacement, and their predictions are averaged or put to a vote.

Overfitting occurs when a model fits its training data so closely that it fails to generalize to new data. Random Forest is a well-known example of bagging.
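A minimal sketch of bagging for regression follows. The base model is a deliberately simple nearest-neighbor lookup, chosen here only because it is short and has high variance; the data, the number of models, and the seed are likewise assumptions for illustration.

```python
import random

def bootstrap(data, rng):
    # Draw len(data) items with replacement, so some repeat and some are left out.
    return [rng.choice(data) for _ in data]

def nearest_neighbor_predict(sample, x):
    # High-variance base learner: copy the y value of the closest training x.
    return min(sample, key=lambda pair: abs(pair[0] - x))[1]

def bagged_predict(data, x, n_models=25, seed=0):
    """Average the predictions of models trained on different bootstrap samples."""
    rng = random.Random(seed)
    predictions = [
        nearest_neighbor_predict(bootstrap(data, rng), x)
        for _ in range(n_models)
    ]
    return sum(predictions) / len(predictions)

# Hypothetical (x, y) training pairs.
data = [(1, 1.2), (2, 1.9), (3, 3.2), (4, 3.8), (5, 5.1)]
print(bagged_predict(data, 3))
```

Averaging smooths out the quirks of any single bootstrap sample, which is where the reduction in variance comes from.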

10. Boosting

Boosting’s overarching goal is to transform weak learners into a strong learner. A base learning algorithm is applied repeatedly, and each round produces a new weak prediction rule.

XGBoost, which stands for Extreme Gradient Boosting, is a popular implementation of boosting. A sample of data is fed into a model, and the models are then trained sequentially, with each new weak learner attempting to fix the errors of its predecessor.
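The sequential idea can be sketched with a bare-bones gradient boosting loop for regression, where each weak learner is a one-split "stump" fitted to the errors left by the learners before it. This is a simplified illustration and not how XGBoost itself is implemented; the data, number of rounds, and learning rate are assumptions.

```python
def fit_stump(xs, residuals):
    """Weak learner: one threshold split, predicting the mean residual on each side.

    Assumes xs contains at least two distinct values.
    """
    best = None
    for t in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        err = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
        if best is None or err < best[0]:
            best = (err, t, lm, rm)
    _, t, lm, rm = best
    return lambda x: lm if x <= t else rm

def boost(xs, ys, rounds=20, lr=0.5):
    """Train stumps one after another, each on the current residuals."""
    base = sum(ys) / len(ys)  # start from the mean prediction
    stumps = []

    def predict(x):
        return base + lr * sum(stump(x) for stump in stumps)

    for _ in range(rounds):
        residuals = [y - predict(x) for x, y in zip(xs, ys)]
        stumps.append(fit_stump(xs, residuals))
    return predict

# Hypothetical training data.
xs = [1, 2, 3, 4, 5, 6]
ys = [1.0, 1.2, 3.0, 3.1, 5.0, 5.2]
model = boost(xs, ys)
print(round(model(3), 2))
```

Each stump on its own is a poor predictor, but the sum of many small corrections tracks the training data closely.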

11. Dimensionality Reduction

Dimensionality reduction decreases the number of input variables (features) in the training data.

The complexity of a model increases with the number of features, which raises the risk of overfitting and can reduce accuracy on new data.

A dataset with 100 columns, for instance, might be reduced to 20 columns using dimensionality reduction.

This can be done by choosing the most pertinent features or by creating new features from already existing ones, both of which are part of feature engineering.

Principal Component Analysis (PCA) is one common dimensionality reduction technique.
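As a rough sketch of PCA, the example below reduces two columns to one by projecting the data onto its first principal component. It finds that direction with power iteration, which is one simple approach chosen here to avoid extra libraries; the data is made up, and library implementations typically use a different decomposition.

```python
import math

def first_principal_component(rows, iterations=100):
    """Return the direction of greatest variance and the data projected onto it."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Sample covariance matrix of the centered data.
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    # Power iteration: repeatedly multiply by cov and renormalize.
    v = [1.0] * d
    for _ in range(iterations):
        v = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in v))
        v = [x / norm for x in v]
    projected = [sum(c[j] * v[j] for j in range(d)) for c in centered]
    return v, projected

# Hypothetical two-column dataset in which the columns are strongly related.
rows = [(1, 2), (2, 4.1), (3, 5.9), (4, 8.2), (5, 9.8)]
direction, reduced = first_principal_component(rows)
print(len(rows[0]), "columns reduced to 1:", [round(x, 2) for x in reduced])
```

Because the two columns move together, a single new column keeps most of the variation in the original data.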

Conclusion

This article’s goal was to give you a clear understanding of the most popular machine learning algorithms. Have a look at these Popular Machine Learning Algorithms if you want to learn more about each of them in detail.