Applying the Central Limit Theorem in R

The central limit theorem states that if the sample size is large enough, the sampling distribution of the sample mean is approximately normal, even if the population distribution is not (provided the population has a finite variance).

According to the central limit theorem, the sampling distribution also has the following properties:

1. The mean of the sampling distribution equals the mean of the population.

$\mu_{\bar{x}} = \mu$

2. The standard deviation of the sampling distribution (the standard error) equals the population standard deviation divided by the square root of the sample size.


$\sigma_{\bar{x}} = \sigma / \sqrt{n}$
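As a quick sketch of the second property, the helper below (a hypothetical function named `standard_error`, not part of base R) computes σ/√n and shows that quadrupling the sample size halves the standard deviation of the sample mean.

```r
# Hypothetical helper: theoretical standard deviation of the sample mean
# (the standard error) for a population with standard deviation sigma.
standard_error <- function(sigma, n) {
  sigma / sqrt(n)
}

# Quadrupling n from 25 to 100 halves the standard error.
standard_error(sigma = 2, n = 25)   # returns 0.4
standard_error(sigma = 2, n = 100)  # returns 0.2
```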

Example: Applying the Central Limit Theorem in R

The central limit theorem is demonstrated in R using the following example.

Assume that the width of a turtle’s shell is distributed uniformly, with a minimum width of 1.2 inches and a maximum width of 6 inches.

That is, if we chose a turtle at random and measured the width of its shell, it could be anywhere between 1.2 and 6 inches wide.

The following code demonstrates how to generate a dataset in R that contains the measurements of 1,000 turtle shell widths, uniformly distributed between 1.2 and 6 inches.


Use the set.seed() function to make this example reproducible.

set.seed(123)

Generate 1,000 values from a uniform distribution between 1.2 and 6.

data <- runif(n=1000, min=1.2, max=6)

Now we can visualize the distribution of turtle shell widths using a histogram.

hist(data, col='steelblue', main='Histogram of Turtle Shell Widths')

Note that the distribution of turtle shell widths is not normal at all; it is roughly flat across the whole range.
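A numeric check of the same point, as a sketch: splitting the range into four equal-width bins and counting the simulated widths should give roughly equal counts (about 250 each), which is what a flat, non-bell-shaped distribution looks like. The block regenerates `data` with the same seed so it runs on its own; the choice of four bins is arbitrary.

```r
set.seed(123)
data <- runif(n = 1000, min = 1.2, max = 6)

# Four equal-width bins spanning [1.2, 6]; include.lowest keeps a value
# exactly equal to 1.2 inside the first bin.
bins <- cut(data, breaks = seq(1.2, 6, length.out = 5), include.lowest = TRUE)
counts <- table(bins)
counts
```

A normal distribution would instead pile most of its values into the two middle bins.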

Now imagine that we repeatedly choose 10 turtles at random from this population and calculate the sample mean each time.

The following code demonstrates how to do this in R and view the distribution of sample means with a histogram:

Create an empty vector to hold the sample means.

sample <- c()

Take 1,000 random samples of size n = 10:

n <- 1000
for (i in 1:n) {
  sample[i] <- mean(sample(data, 10, replace = TRUE))
}

Calculate the mean and standard deviation of the sample means.


mean(sample)
[1] 3.579381

sd(sample)
[1] 0.4326929
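The same simulation can also be written without an explicit loop. A sketch using base R's `replicate()`, which evaluates the expression 1,000 times and collects the results in a numeric vector; `sample_means` is a new name chosen here so the result does not share a name with the base `sample()` function. The printed values should be close to those above, though they may not match digit for digit across R versions.

```r
set.seed(123)
data <- runif(n = 1000, min = 1.2, max = 6)

# 1,000 sample means, each from a sample of 10 widths drawn with replacement.
sample_means <- replicate(1000, mean(sample(data, 10, replace = TRUE)))

mean(sample_means)
sd(sample_means)
```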

To visualize the sampling distribution of sample means, make a histogram.

hist(sample, col='red', xlab='Turtle Shell Width', main='Sample size = 10')

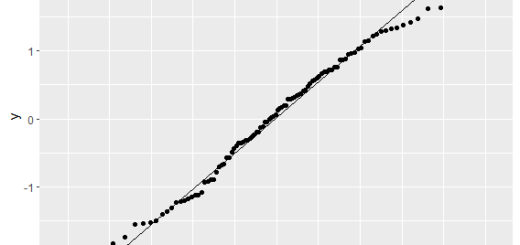

Even though the population the samples came from is not normally distributed, the sampling distribution of the sample means appears to be approximately normal.

The mean and standard deviation of this sampling distribution are also worth noting.

x̄: 3.6, s: 0.43
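These values can be checked against the two properties listed at the start. For a uniform distribution on [a, b], the population mean is (a + b)/2 and the population standard deviation is (b − a)/√12. Assuming the population is exactly uniform on [1.2, 6], a short sketch of the theoretical values for n = 10:

```r
a <- 1.2
b <- 6
n <- 10

mu    <- (a + b) / 2         # theoretical mean of the sample means: 3.6
sigma <- (b - a) / sqrt(12)  # population standard deviation, about 1.386
se    <- sigma / sqrt(n)     # theoretical standard error, about 0.438

round(c(mean = mu, se = se), 3)
```

The observed values (3.6 and 0.43) are close to these. A small gap is expected, partly because the samples are drawn from the 1,000 simulated widths rather than from the population itself.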

Now, let’s say we increase the sample size from 10 to 50 and generate the sample means histogram.

Create an empty vector to hold the sample means.

sample50 <- c()

Take 1,000 random samples of size n = 50:

n <- 1000
for (i in 1:n) {
  sample50[i] <- mean(sample(data, 50, replace = TRUE))
}

To visualize the sampling distribution of sample means, make a histogram.

hist(sample50, col='steelblue', xlab='Turtle Shell Width', main='Sample size = 50')

mean(sample50)
[1] 3.589215

sd(sample50)
[1] 0.1972805

The sampling distribution is again approximately normal, its mean is nearly the same, and its standard deviation is even smaller.

This is because we used a larger sample size (n = 50) than in the previous case (n = 10), and the standard deviation of the sample means shrinks in proportion to 1/√n.
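Because the standard error is σ/√n, going from n = 10 to n = 50 should shrink it by a factor of √(50/10) = √5 ≈ 2.24. A quick sketch comparing that factor with the ratio of the two standard deviations reported above:

```r
# Standard deviations of the sample means reported in the example above.
sd_n10 <- 0.4326929
sd_n50 <- 0.1972805

expected_ratio <- sqrt(50 / 10)      # about 2.236
observed_ratio <- sd_n10 / sd_n50    # about 2.193

round(c(expected = expected_ratio, observed = observed_ratio), 3)
```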


As we use larger and larger sample sizes, the standard deviation of the sample means keeps getting smaller.
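To see the trend across more than two sample sizes, here is a sketch that repeats the simulation for several values of n (the sizes 5, 10, 50, and 200 are arbitrary choices) and compares the standard deviation of the sample means with the theoretical σ/√n for a uniform population on [1.2, 6]:

```r
set.seed(123)
data <- runif(n = 1000, min = 1.2, max = 6)

sizes <- c(5, 10, 50, 200)

# For each sample size, simulate 1,000 sample means and take their sd.
observed_se <- sapply(sizes, function(size) {
  sd(replicate(1000, mean(sample(data, size, replace = TRUE))))
})

# Theoretical standard error using the population sd of Uniform(1.2, 6).
theoretical_se <- ((6 - 1.2) / sqrt(12)) / sqrt(sizes)

round(data.frame(n = sizes, observed = observed_se, theoretical = theoretical_se), 3)
```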

This is a practical example of the central limit theorem.

In general, then, a larger sample gives a more precise estimate of the population mean. In practice, the sample size is usually chosen so that the planned test has adequate statistical power.
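One common way to choose a sample size with adequate power is a power calculation. Below is a sketch using `power.t.test()` from R's built-in stats package for a one-sample t-test. The effect size (`delta = 0.5` inches), the standard deviation (`sd = 1.39`, roughly the σ of the turtle example), the 5% significance level, and the 80% target power are illustrative assumptions, not values from the example.

```r
# Solve for the sample size n by leaving n unspecified (NULL by default).
result <- power.t.test(delta = 0.5, sd = 1.39, sig.level = 0.05,
                       power = 0.80, type = "one.sample",
                       alternative = "two.sided")

n_required <- ceiling(result$n)  # round up to a whole number of turtles
n_required
```

Rounding up ensures the achieved power is at least the 80% target.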